Building an AI System Taught Me It’s Not About the Model

AI is everywhere right now. New models, new benchmarks, new capabilities every week.

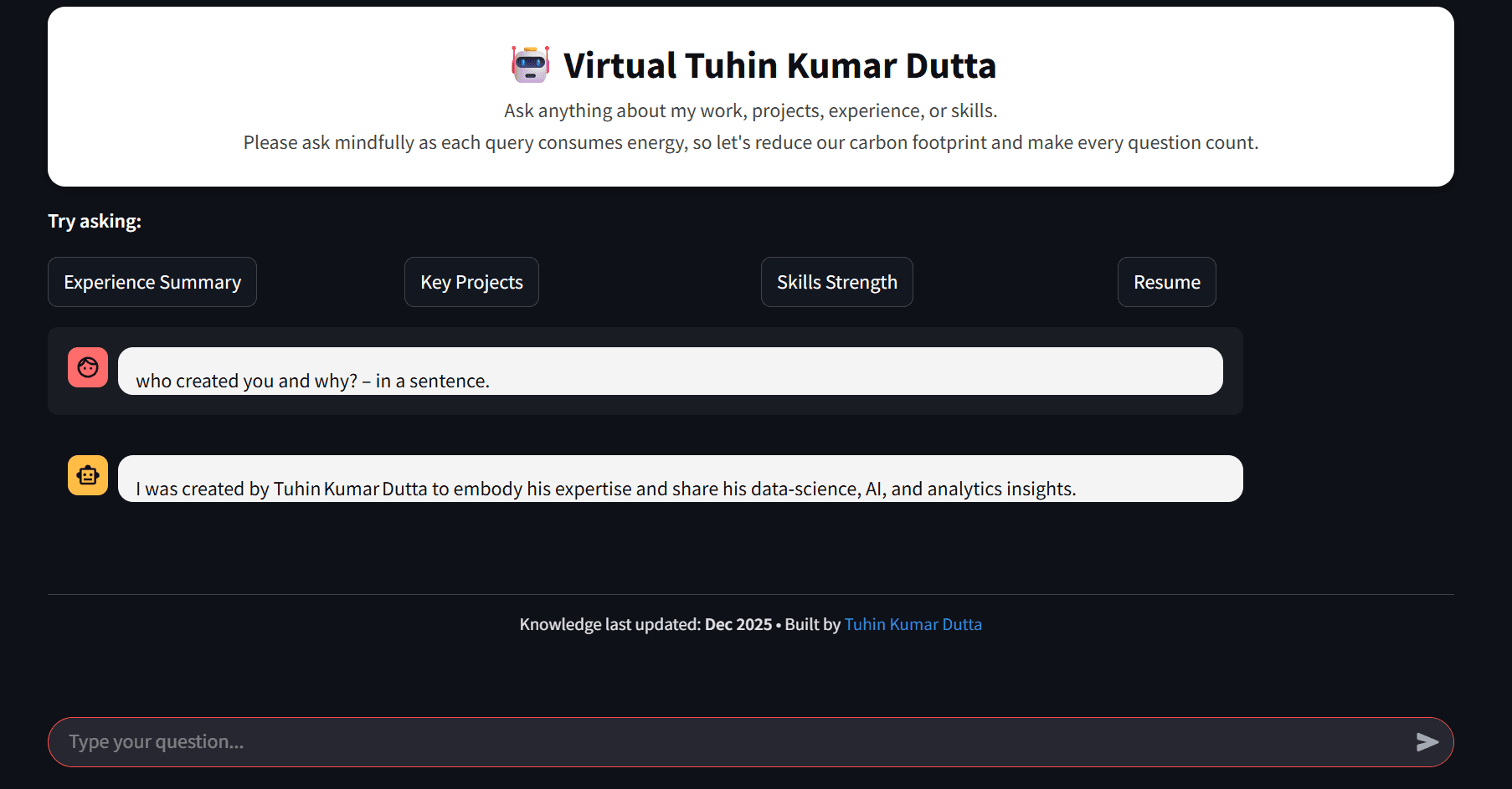

But here’s the uncomfortable truth:

Using an LLM API is not impressive anymore.

Anyone can do it.

And honestly, most people are doing the same thing.

Call an API → write a prompt → get a response.

That’s not system building. That’s just usage.

The Realization

At some point, it hit me:

There’s nothing creative about just using an LLM API.

Unless you’re training or fine-tuning models yourself, you’re operating at the same level as everyone else.

The real differentiation is not the model.

It’s everything around it.

How you structure the system

How you control execution

How you manage data flow

How you handle reliability, cost, and scale

That’s where actual engineering begins.

The Problem I Wanted to Solve

I started with a simple but frustrating problem:

Getting reliable, unbiased, and well-researched news is hard.

Not just headlines, but actual understanding.

Things get messy quickly:

News is scattered across sources

Bias is everywhere

Verifying authenticity takes effort

Exploring a topic deeply requires jumping across multiple links

Now try answering something like:

“What are the latest global developments in AI regulation, and what do they actually mean?”

This is not a single search.

This is a multi-step research process.

You need to:

Discover relevant articles

Extract sources and links

Read and validate content

Follow deeper references

Synthesize everything into a coherent answer
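
The steps above can be sketched as a bounded crawl. This is only an illustration under stated assumptions: `FAKE_WEB`, `discover`, `research`, and `synthesize` are hypothetical names, the "web" is an in-memory dictionary with placeholder text, and the synthesis step stands in for a model call.

```python
from collections import deque

# Hypothetical in-memory "web": URL -> (text, outgoing links).
# A real system would fetch and parse live pages instead.
FAKE_WEB = {
    "news/a": ("Placeholder text of article A.", ["ref/1", "ref/2"]),
    "news/b": ("Placeholder text of article B.", ["ref/2"]),
    "ref/1": ("Placeholder text of reference 1.", []),
    "ref/2": ("Placeholder text of reference 2.", ["ref/3"]),
    "ref/3": ("Placeholder text of reference 3.", []),
}


def discover(query):
    """Step 1: return seed article URLs for a query (stubbed)."""
    return ["news/a", "news/b"]


def research(query, max_depth=1):
    """Steps 2-4: read pages, extract links, follow references up to max_depth."""
    seen = set()
    notes = []
    queue = deque((url, 0) for url in discover(query))
    while queue:
        url, depth = queue.popleft()
        if url in seen or url not in FAKE_WEB:
            continue  # skip duplicates and pages that cannot be read
        seen.add(url)
        text, links = FAKE_WEB[url]
        notes.append((url, text))
        if depth < max_depth:
            queue.extend((link, depth + 1) for link in links)
    return notes


def synthesize(notes):
    """Step 5: stand-in for the model call; here it only lists the sources."""
    return "\n".join(f"[{url}] {text}" for url, text in notes)


notes = research("AI regulation", max_depth=1)
print(synthesize(notes))
```

The `max_depth` limit is the important design choice: following references without a bound is how cost and latency get out of hand.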

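This is where naive “LLM + prompt” approaches fail. A single prompt cannot fetch fresh articles, follow references, or check one source against another.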

The Shift in Thinking

I stopped thinking:

“How do I get a better answer from the model?”

And started thinking:

“How do I build a system that can arrive at a better answer?”

That’s a completely different problem.

The System I’m Building

Instead of a single AI component, I broke the system into layers.

🧠 Agent (Reasoning Layer)

Handles:

Decision making

Multi-step execution

When to fetch data

How to synthesize results
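
A minimal sketch of such a reasoning loop, assuming a decide, act, observe cycle with a hard step budget. `AgentState`, `decide`, and `run_agent` are hypothetical names, and `decide` is a fixed rule standing in for the model's decision, not the actual agent from this project.

```python
from dataclasses import dataclass, field


@dataclass
class AgentState:
    question: str
    evidence: list = field(default_factory=list)
    steps: int = 0


def decide(state, min_evidence=2):
    """Stand-in for the model's decision: fetch more data or answer now."""
    return "fetch" if len(state.evidence) < min_evidence else "answer"


def run_agent(question, fetch, max_steps=5):
    """Loop: decide -> act -> observe, stopping at max_steps at the latest."""
    state = AgentState(question)
    while state.steps < max_steps:
        state.steps += 1
        if decide(state) == "answer":
            break
        state.evidence.append(fetch(question, state.steps))
    # Synthesis would be another model call; here the evidence is just joined.
    return {"answer": " | ".join(state.evidence), "steps": state.steps}


# Usage with a stub fetcher that returns a labelled placeholder document.
result = run_agent("What changed?", fetch=lambda q, i: f"doc{i}")
print(result)
```

The step budget matters more than the decision rule: whatever the model decides, the loop cannot run forever.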

⚙️ MCP Server (Execution Layer)

Handles:

Fetching latest news

Scraping websites

Returning structured and unstructured data
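
To make the execution layer concrete, here is a small sketch of a tool registry with a uniform result envelope. It is not the MCP protocol or any MCP SDK; `TOOLS`, `tool`, `call_tool`, and the two example tools are hypothetical, and the regular expressions are far too naive for real HTML.

```python
import re

TOOLS = {}


def tool(name):
    """Register a function under a tool name."""
    def register(fn):
        TOOLS[name] = fn
        return fn
    return register


@tool("extract_links")
def extract_links(html):
    """Structured output: the href values found in an HTML snippet."""
    return {"links": re.findall(r'href="([^"]+)"', html)}


@tool("strip_tags")
def strip_tags(html):
    """Unstructured output: the text with tags removed."""
    return {"text": re.sub(r"<[^>]+>", "", html).strip()}


def call_tool(name, **kwargs):
    """Dispatch a call and wrap the outcome in a uniform envelope."""
    if name not in TOOLS:
        return {"ok": False, "error": f"unknown tool: {name}"}
    try:
        return {"ok": True, "data": TOOLS[name](**kwargs)}
    except Exception as exc:  # the caller gets an error value, not a crash
        return {"ok": False, "error": str(exc)}


html = '<p>See <a href="https://example.com/a">this</a></p>'
print(call_tool("extract_links", html=html))
print(call_tool("strip_tags", html=html))
```

Returning an envelope instead of raising lets the reasoning layer treat a failed fetch as one more observation to reason about.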

🚪 Gateway (Control Layer, in progress)

Handles:

Authentication (JWT)

Rate limiting

User access control

System exposure
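
Rate limiting is the easiest of these to illustrate. The sketch below is a per-user token bucket, which is one common approach and an assumption here, not a description of the actual gateway. The gateway in this project is written in Go; Python is used only to keep the illustration short. `TokenBucket` and `RateLimiter` are hypothetical names.

```python
import time


class TokenBucket:
    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate          # tokens refilled per second
        self.capacity = capacity  # maximum burst size
        self.clock = clock
        self.tokens = float(capacity)
        self.updated = clock()

    def allow(self):
        """Refill based on elapsed time, then spend one token if available."""
        now = self.clock()
        elapsed = now - self.updated
        self.updated = now
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class RateLimiter:
    """Keeps one bucket per user id."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate, self.capacity, self.clock = rate, capacity, clock
        self.buckets = {}

    def allow(self, user_id):
        bucket = self.buckets.get(user_id)
        if bucket is None:
            bucket = TokenBucket(self.rate, self.capacity, self.clock)
            self.buckets[user_id] = bucket
        return bucket.allow()


limiter = RateLimiter(rate=1, capacity=2)
print([limiter.allow("user-1") for _ in range(3)])
```

The injectable `clock` exists so the limiter can be tested without sleeping.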

🗄️ State Layer

Handles:

Conversation and session tracking (PostgreSQL)
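
A rough idea of what conversation and session tracking can look like. The schema and function names are assumptions, and the sketch uses Python's built-in `sqlite3` as a stand-in so it runs without a server; with PostgreSQL the same two tables would sit behind a PostgreSQL driver and its own placeholder syntax.

```python
import sqlite3

# Hypothetical schema: one row per session, one row per message.
SCHEMA = """
CREATE TABLE sessions (
    id INTEGER PRIMARY KEY,
    user_id TEXT NOT NULL
);
CREATE TABLE messages (
    id INTEGER PRIMARY KEY,
    session_id INTEGER NOT NULL REFERENCES sessions(id),
    role TEXT NOT NULL,
    content TEXT NOT NULL
);
"""


def open_store():
    """Create an in-memory database with the schema applied."""
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    return conn


def start_session(conn, user_id):
    cur = conn.execute("INSERT INTO sessions (user_id) VALUES (?)", (user_id,))
    return cur.lastrowid


def add_message(conn, session_id, role, content):
    conn.execute(
        "INSERT INTO messages (session_id, role, content) VALUES (?, ?, ?)",
        (session_id, role, content),
    )


def history(conn, session_id):
    """Return (role, content) pairs for a session in insertion order."""
    rows = conn.execute(
        "SELECT role, content FROM messages WHERE session_id = ? ORDER BY id",
        (session_id,),
    )
    return rows.fetchall()


conn = open_store()
sid = start_session(conn, "user-1")
add_message(conn, sid, "user", "What changed this week?")
add_message(conn, sid, "assistant", "Here is a summary.")
print(history(conn, sid))
```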

Process Flow

How It Fits Together

At a high level:

The agent thinks

The MCP server executes

The gateway controls

The database remembers
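
In code, that flow can be as small as one function that takes each layer as a plain callable. This is a sketch under assumptions: `handle_request` and its parameter names are hypothetical, and every layer is stubbed in the usage example.

```python
def handle_request(user_id, question, *, allow, run_agent, call_tool, save):
    """Route one request through the four layers, in order."""
    if not allow(user_id):                   # the gateway controls
        return {"status": 429, "answer": None}
    answer = run_agent(question, call_tool)  # the agent thinks, tools execute
    save(user_id, question, answer)          # the database remembers
    return {"status": 200, "answer": answer}


# Usage with stubs standing in for each layer.
log = []
response = handle_request(
    "user-1",
    "latest on AI regulation",
    allow=lambda user: True,
    run_agent=lambda q, tools: tools("search", q),
    call_tool=lambda name, arg: f"{name}:{arg}",
    save=lambda *row: log.append(row),
)
print(response)
```

Because each layer is passed in, any one of them can be replaced or tested on its own.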

Why This Separation Matters

This is where things started to click.

Once responsibilities are separated:

You can scale components independently

You can secure the system properly

You can control cost and abuse

You can evolve each part without breaking everything

Most “AI projects” skip this entirely.

That’s why they don’t go beyond demos.

Why I’m Building the Gateway in Go

I could have used Python again, but I didn’t.

Because this layer is different.

This is not about AI.

This is about control and infrastructure.

Go fits better here:

Lightweight and fast

Strong concurrency model

Ideal for API gateways and control services

This was less about learning a new language, and more about choosing the right tool for the role.

What This Really Is

This is not just a “news agent”.

It’s evolving into:

A modular LLM system for controlled, iterative research workflows

Where:

reasoning is separated from execution

execution is separated from control

and everything is designed to scale

What I Learned

A few things became very clear during this process:

LLMs are the easiest part of the system

System design is where the real complexity lies

Control and safety are not optional

Separation of concerns is not over-engineering; it’s necessary

What’s Next

Completing the Go-based gateway

Integrating all components

Deploying the system on AWS (likely ECS)

Building a UI layer on top

This started as an AI project.

It turned into a system design exercise.

And honestly, that’s where the real learning happened.