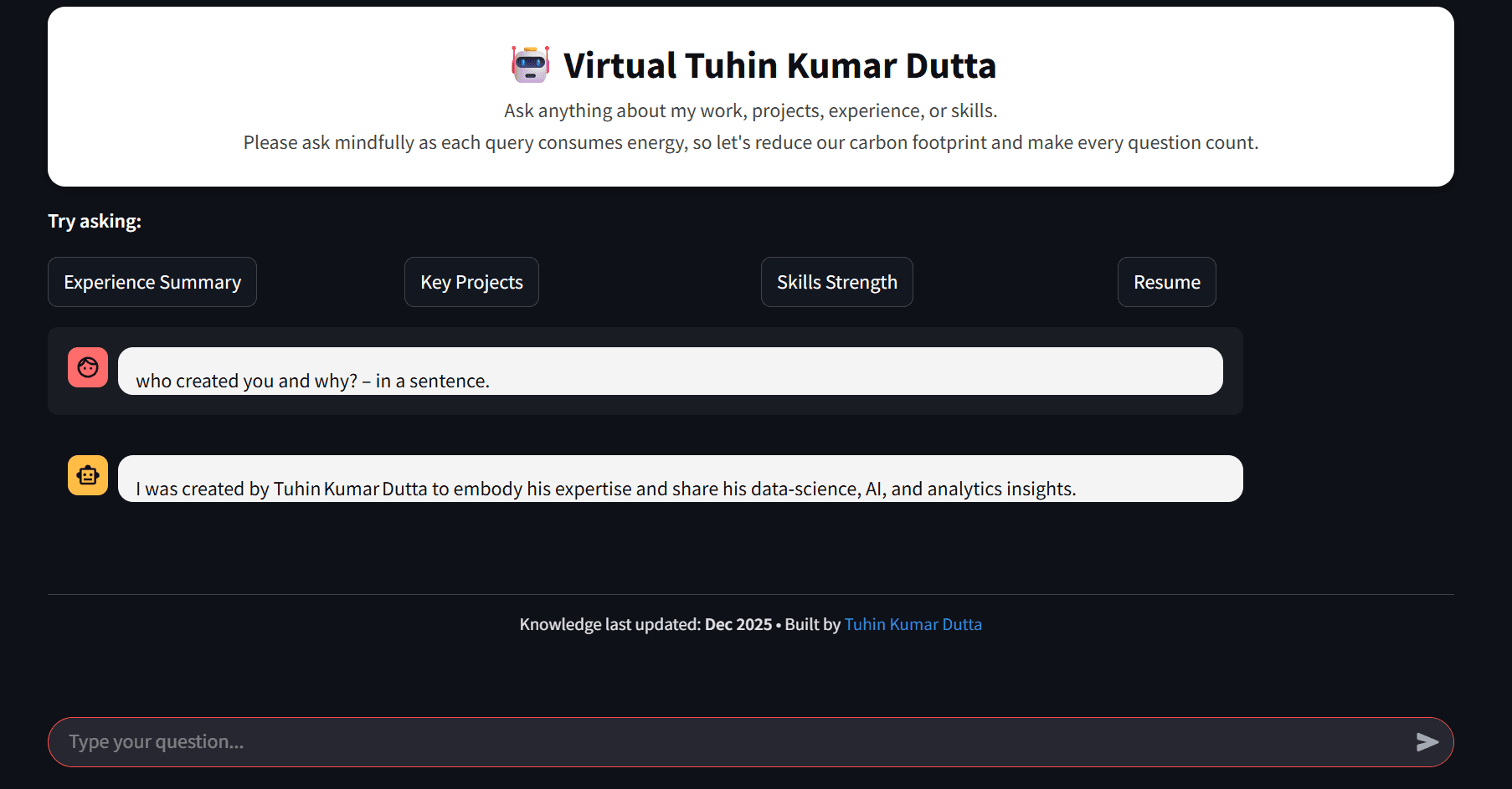

Zenalyze: My AI-Assisted Data Analysis Tool (And Why I Built It)

Let AI handle the boilerplate while you focus on the fun part of analysis.


Most AI “data analysis” tools today fall into two groups:

They pretend to analyze your data but don’t actually run code.

They demand you upload your data to some cloud black box.

Neither works for real-world analytics.

I wanted something different.

Something that could sit right in my local environment, understand my tables, generate real Python code, execute it, and help me explore data the same way an actual teammate would.

That’s where Zenalyze came from — a lightweight package that turns LLMs into a practical coding partner without ever exposing your actual data values.

Let me walk you through the motivation, design thinking, and how it fits into a real workflow.

🧩 The Problem I Wanted to Solve

Anyone working with Pandas or PySpark knows the cycle:

Load data

Look at shapes, missing values, weird fields

Write a bunch of boilerplate

Rinse and repeat for every analysis step

And every time you want to try something new, you end up rewriting the same code:

df.groupby(...).agg(...)

df.merge(...)

df.plot(...)

I wanted a tool that handled this repetitive side of analysis, while still letting me remain in control of the code. Something that generates real Python, runs in my own environment, and behaves predictably.

🎯 The Motivation Behind Zenalyze

A few core ideas shaped the project:

1. LLMs should help you code, not replace your environment

I didn’t want a chatbot that tells me what could work.

I wanted a companion that writes actual code I can run right away.

2. Your data never leaves your machine

If you’re analyzing customer revenue, fraud records, supply chain data, medical outcomes — the last thing you want is your rows flying off into the internet.

Zenalyze only sends metadata, not data.

3. History-aware analysis

LLMs forget.

Data analysts don’t have time to babysit them.

Zenalyze:

tracks every step

remembers derived columns

summarizes past actions

reuses existing variables

never re-imports Pandas/Spark unnecessarily

So the conversation stays consistent, and the code becomes cleaner over time.
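One way to picture the history tracking described above is a rolling window: keep the most recent steps in full and collapse older ones into short summaries. The sketch below is illustrative only. `StepHistory`, its fields, and the window size are assumptions made for this example, not Zenalyze's actual internals, and the "summary" here is simply the original request text rather than something an LLM wrote.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Step:
    request: str   # what the analyst asked for
    code: str      # code that was generated and run


@dataclass
class StepHistory:
    """Hypothetical rolling history: recent steps in full, older ones summarized."""
    keep_last: int = 5
    recent: deque = field(default_factory=deque)
    summaries: list = field(default_factory=list)

    def add(self, request: str, code: str) -> None:
        self.recent.append(Step(request, code))
        # Once the window overflows, demote the oldest step to a one-line summary.
        while len(self.recent) > self.keep_last:
            old = self.recent.popleft()
            self.summaries.append(f"earlier: {old.request}")

    def as_prompt_context(self) -> str:
        """Render the history as text that could be prepended to the next prompt."""
        lines = list(self.summaries)
        lines += [f"# {s.request}\n{s.code}" for s in self.recent]
        return "\n".join(lines)


# Small window so the demotion is visible after three steps.
history = StepHistory(keep_last=2)
history.add("load orders", "orders = pd.read_csv('data/orders.csv')")
history.add("add revenue column", "orders['revenue'] = orders['qty'] * orders['price']")
history.add("total revenue per customer", "rev = orders.groupby('customer_id')['revenue'].sum()")
print(history.as_prompt_context())
```

The design choice worth noting is that the prompt stays bounded: no matter how long the session runs, only a fixed number of full code snippets travel with each request.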

4. Make the experience fun

I didn’t want another “heavy enterprise tool”.

Just a friendly, intelligent coding buddy in my notebook.

🔐 Security: Close to Your Data, Never Inside It

One thing I was very firm about:

The LLM should never see raw data.

And it doesn’t.

It only extracts and uses:

column names

descriptions

data types

row/column counts

null percentages

high-level distributions

patterns

derived fields created in earlier steps

This is enough to provide context for the LLM to generate correct code, but not enough to reveal anything sensitive.

Think of it as letting someone read your database schema without giving them access to the rows.
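To make the idea concrete, here is a minimal sketch of collecting this kind of metadata with Pandas. The function name `extract_metadata` and the shape of the returned dictionary are assumptions made for illustration, and Zenalyze's own extraction may differ. The point is that only column-level facts are collected, never row values.

```python
import pandas as pd


def extract_metadata(df: pd.DataFrame, name: str) -> dict:
    """Collect schema-level facts about a table without copying any row values."""
    return {
        "table": name,
        "n_rows": int(df.shape[0]),
        "n_cols": int(df.shape[1]),
        "columns": {
            col: {
                "dtype": str(df[col].dtype),
                # Share of missing values, as a percentage rounded to one decimal.
                "null_pct": round(float(df[col].isna().mean()) * 100, 1),
                # Count of distinct non-null values (a count, not the values).
                "n_unique": int(df[col].nunique()),
            }
            for col in df.columns
        },
    }


# Tiny made-up table just to exercise the function.
orders = pd.DataFrame(
    {
        "order_id": [1, 2, 3, 4],
        "customer_id": ["a", "b", "a", None],
        "amount": [10.0, 25.5, None, 40.0],
    }
)
meta = extract_metadata(orders, "orders")
print(meta)
```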

But use it responsibly.

Even though Zenalyze never sends actual records to the LLM, good practice is to run it in an isolated and monitored environment:

Jupyter inside a virtual environment

Controlled outbound/inbound network rules

No access to production systems

Zero trust toward external LLMs you didn’t configure

Because yes — while Zenalyze won’t misbehave on its own, a malicious or rogue LLM can try to generate harmful code.

Not likely unless someone purposely built an LLM for chaos, but still worth mentioning.

Smart tools deserve smart environments.

🤝 What Zenalyze Actually Does

When you interact with it:

zen.do("calculate total revenue per customer")

It does a few things behind the scenes:

builds a detailed prompt with metadata

injects the correct dataset references

generates the Python code

executes the code right in your environment

saves the result as a variable

remembers what you just did

lets you ask follow-up questions through the buddy:

zen.buddy("Explain what we did in the last step")

It’s smooth, predictable, and feels like working with a junior analyst who never gets tired.
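The steps above can be sketched as a small generate-then-execute loop. Everything in this sketch is a stand-in: `fake_llm` returns hard-coded code instead of calling a model, and `MiniZen` is a hypothetical class, not the real Zenalyze implementation. It uses plain Python lists and dicts so it runs without any dependencies.

```python
from typing import Callable


def fake_llm(prompt: str) -> str:
    """Stand-in for a real model call: always returns the same snippet."""
    return (
        "revenue_per_customer = {}\n"
        "for row in orders:\n"
        "    key = row['customer_id']\n"
        "    revenue_per_customer[key] = revenue_per_customer.get(key, 0) + row['amount']\n"
    )


class MiniZen:
    """Hypothetical, stripped-down version of the do() workflow."""

    def __init__(self, namespace: dict, metadata: dict, llm: Callable[[str], str]):
        self.namespace = namespace   # where generated code runs (e.g. globals())
        self.metadata = metadata     # schema-level facts only, no row values
        self.llm = llm
        self.history = []            # list of (request, code) pairs

    def do(self, request: str) -> str:
        # 1. Build a prompt from metadata and earlier requests, not from the data.
        past = "; ".join(req for req, _ in self.history)
        prompt = f"Tables: {self.metadata}\nEarlier steps: {past}\nTask: {request}"
        # 2. Ask the model for code, then 3. run it in the caller's namespace.
        code = self.llm(prompt)
        exec(code, self.namespace)
        # 4. Remember the step so follow-up requests have context.
        self.history.append((request, code))
        return code


# A plain dict plays the role of the notebook namespace here.
session = {"orders": [
    {"customer_id": "a", "amount": 10.0},
    {"customer_id": "b", "amount": 25.5},
    {"customer_id": "a", "amount": 4.5},
]}
mini = MiniZen(session, {"orders": ["customer_id", "amount"]}, fake_llm)
mini.do("calculate total revenue per customer")
print(session["revenue_per_customer"])
```

Because the generated code runs through `exec` in your own namespace, it has the same permissions you do, which is why the isolation advice in the security section matters.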

🧘 Why It's Called Zenalyze

Because the tool’s job is to take the chaotic part of exploratory analysis — the constant back-and-forth, the rewriting, the checking, the clutter — and make it calm, clean, and focused.

Data work shouldn’t feel like fighting your tools.

It should feel like thinking clearly.

That’s the vibe.

🛠️ Setup & Environment Notes

Before we get into the demo, a few practical reminders:

Always use a .env file for API keys

Keep your environment isolated (venv/conda)

Monitor outbound connections

Use secure LLM providers you trust

Keep datasets local or on controlled Spark clusters

Zenalyze integrates tightly with Pandas and PySpark, so as long as your environment is tidy, the experience will be clean.

📦 Installation

Once the package is on PyPI:

pip install zenalyze

Or directly from GitHub:

pip install git+https://github.com/tuhindutta/Zenalyze.git

🚀 Demo Time

Now let’s actually use Zenalyze and see what it feels like in a real environment.

Don’t worry — this part is straightforward. No complicated infra, no scary configs.

Just a clean Python setup and a couple of environment variables.

1️⃣ Create a Virtual Environment

Always start in a clean workspace. It keeps things tidy and avoids package mess.

python -m venv .venv

source .venv/bin/activate # Mac/Linux

# or

.\.venv\Scripts\activate # Windows

You should now see (.venv) in your terminal prompt.

2️⃣ Install Zenalyze

For now, install it from GitHub:

pip install git+https://github.com/tuhindutta/Zenalyze.git

Once it's on PyPI, you'll switch to:

pip install zenalyze

3️⃣ Add Your Environment Variables

Zenalyze uses four environment variables: three optional model settings and one API key.

You can put them in a .env file, export them directly, or load them through your preferred method.

Required / Optional Env Variables

| Variable | Purpose | Default |
| --- | --- | --- |
| MODEL | Main LLM for code generation | openai/gpt-oss-120b |
| GROQ_API_KEY | API key for your LLM provider | none (must provide if using Groq) |
| BUDDY_MODEL | LLM for natural-language buddy responses | openai/gpt-oss-120b |
| CODE_SUMMARIZER_MODEL | LLM for summarizing long code histories | openai/gpt-oss-120b |

Example .env file

Create a file named .env in your project folder:

MODEL=openai/gpt-oss-120b

BUDDY_MODEL=openai/gpt-oss-120b

CODE_SUMMARIZER_MODEL=openai/gpt-oss-120b

GROQ_API_KEY=your_groq_key_here

Load it using python-dotenv (optional but convenient)

pip install python-dotenv

In a notebook or script:

from dotenv import load_dotenv

load_dotenv()

And you’re good to go.
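If you'd rather check what your session will pick up before initializing anything, a small helper like the one below can read the four variables with the defaults from the table. The helper name `read_llm_settings` is made up for this example, and how Zenalyze itself reads these variables internally is not shown here.

```python
import os

DEFAULT_MODEL = "openai/gpt-oss-120b"  # default listed in the table above


def read_llm_settings(env=os.environ) -> dict:
    """Return the model settings, falling back to the documented defaults."""
    settings = {
        "MODEL": env.get("MODEL", DEFAULT_MODEL),
        "BUDDY_MODEL": env.get("BUDDY_MODEL", DEFAULT_MODEL),
        "CODE_SUMMARIZER_MODEL": env.get("CODE_SUMMARIZER_MODEL", DEFAULT_MODEL),
    }
    # Report only whether the key is present; never print the key itself.
    settings["GROQ_API_KEY_set"] = bool(env.get("GROQ_API_KEY"))
    return settings


# Example with an explicit mapping instead of the real environment.
example = read_llm_settings({"BUDDY_MODEL": "some/other-model", "GROQ_API_KEY": "abc"})
print(example)
```

Calling `read_llm_settings()` with no argument reads the real environment, so it works the same whether you exported the variables or loaded them with `load_dotenv()`.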

4️⃣ Prepare Your Data Folder (and Optional Description File)

Let’s set up the data Zenalyze will work with.

Start by creating a simple ./data directory, then drop in a few CSV or Excel files.

Example structure:

project/
├── .env
├── demo.ipynb
└── data/
    ├── customers.csv
    ├── orders.csv
    └── desc.json   // optional but highly recommended (discussed below)

Zenalyze will automatically scan this folder, load the files, and extract metadata like column names, dtypes, null percentages, and patterns.

That’s enough for it to start generating clean, context-aware analysis code.

⭐ Optional but Highly Recommended: Add a desc.json File

If you want Zenalyze to understand what your tables actually represent rather than just their structure, you can provide a desc.json file alongside your data files.

This file lets you describe, in your own words:

what each table means

business/domain context

what each column represents

any notes you'd want an analyst to know

There’s no strict formatting rule — you can phrase descriptions however you prefer.

The only requirement is that the top-level keys match your table names without file extensions.

For example:

{
  "customers": {
    "data_desc": "customer master table",
    "columns_desc": {
      "customer_id": "unique customer identifier",
      "region": "geographical region"
    }
  },
  "orders": {
    "data_desc": "transaction-level order data",
    "columns_desc": {
      "order_id": "unique id for each order",
      "amount": "order total value"
    }
  }
}

Name this file exactly desc.json and place it inside data/.
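Since the one hard rule is that top-level keys match the table file names without extensions, a quick check before initializing can catch typos. This is a standalone sketch using only the standard library. `check_desc_keys` is a hypothetical helper, not part of Zenalyze, and it assumes the data files end in `.csv`, `.xlsx`, or `.xls`.

```python
import json
import tempfile
from pathlib import Path

DATA_SUFFIXES = {".csv", ".xlsx", ".xls"}  # assumed set of data file types


def check_desc_keys(data_dir: str) -> dict:
    """Compare desc.json keys with the data file names (without extensions)."""
    folder = Path(data_dir)
    tables = {p.stem for p in folder.iterdir() if p.suffix.lower() in DATA_SUFFIXES}
    described = set(json.loads((folder / "desc.json").read_text(encoding="utf-8")))
    return {
        "missing_descriptions": sorted(tables - described),
        "unknown_keys": sorted(described - tables),
    }


# Demo in a throwaway folder: "order" is a deliberate typo for "orders".
with tempfile.TemporaryDirectory() as tmp:
    for name in ("customers.csv", "orders.csv"):
        (Path(tmp) / name).write_text("id\n1\n", encoding="utf-8")
    desc = {"customers": {"data_desc": "customer master table"}, "order": {}}
    (Path(tmp) / "desc.json").write_text(json.dumps(desc), encoding="utf-8")
    report = check_desc_keys(tmp)

print(report)
```

In a real project you would call `check_desc_keys("./data")` and expect both lists to come back empty.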

🤝 Don’t Want to Write It Manually?

Zenalyze can generate a template for you.

Once Zenalyze is initialized, just run:

zen.create_description_template_file(forced=True)

This will create a desc.json template file inside the appropriate destination — you only need to fill in the details and reinitialize the Zenalyze instance.

5️⃣ Initialize Zenalyze

Fire up Jupyter Notebook and run this inside your notebook:

from zenalyze import create_zenalyze_object_with_env_var_and_last5_hist

zen = create_zenalyze_object_with_env_var_and_last5_hist(globals(), "./data")

This does a lot for you:

loads your datasets

extracts metadata

sets up history retention

configures the LLM models

prepares your analysis environment

You'll now have variables like customers, orders, etc. injected into your session automatically.
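Passing `globals()` is what makes the injection possible: the function receives your notebook's namespace as a dictionary and can add one variable per file. Here is a rough sketch of that mechanism, with a hypothetical `inject_tables` helper and the standard-library `csv` module standing in for the Pandas/PySpark loading a real tool would use. It is not Zenalyze's code.

```python
import csv
import tempfile
from pathlib import Path


def inject_tables(namespace: dict, data_dir: str) -> list:
    """Load every CSV in data_dir and bind it in namespace under the file stem."""
    loaded = []
    for path in sorted(Path(data_dir).glob("*.csv")):
        with path.open(newline="", encoding="utf-8") as handle:
            # Each table becomes a list of row dicts keyed by column name.
            namespace[path.stem] = list(csv.DictReader(handle))
        loaded.append(path.stem)
    return loaded


# Demo with a temporary folder and a plain dict playing the role of globals().
with tempfile.TemporaryDirectory() as tmp:
    (Path(tmp) / "customers.csv").write_text("customer_id,region\n1,EU\n", encoding="utf-8")
    (Path(tmp) / "orders.csv").write_text("order_id,amount\n10,99.5\n", encoding="utf-8")
    notebook_ns = {}
    names = inject_tables(notebook_ns, tmp)

print(names)
print(notebook_ns["orders"])
```

One practical consequence of this pattern: a file named `orders.csv` will overwrite any existing variable called `orders` in your session, so it helps to initialize early in the notebook.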


🎁 Final Thoughts

Zenalyze isn’t meant to be another giant enterprise tool with a 100-page manual.

It’s meant to be:

simple

lightweight

developer-friendly

safe

genuinely helpful

If it makes data exploration even a little bit smoother, cleaner, or more fun — it’s doing its job.

And this is only the beginning.